2 / 18 -- Fault Tolerance for Mobile Devices

4 / 18 -- Constraints Imposed on the Storage Layer

- Scarce Resources (energy, storage, CPU)

- Short-lived and Unpredictable Encounters

- Lack of Trust Among Participants

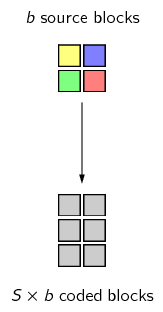

5 / 18 -- Maximizing Storage Efficiency

- Single-Instance Storage

- Generic Lossless Compression

- Techniques Not Considered
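
The first two techniques can be illustrated with a minimal sketch. It assumes SHA-256 as the block identifier and zlib as the generic lossless compressor; both choices, and the `SingleInstanceStore` name, are illustrative assumptions rather than the design presented here.

```python
import hashlib
import zlib


class SingleInstanceStore:
    """Toy in-memory block store: identical blocks are kept only once."""

    def __init__(self):
        # Maps SHA-256 hex digest -> zlib-compressed block contents.
        self._blocks = {}

    def put(self, block: bytes) -> str:
        key = hashlib.sha256(block).hexdigest()
        if key not in self._blocks:
            # First time this content is seen: compress and store it.
            self._blocks[key] = zlib.compress(block)
        return key

    def get(self, key: str) -> bytes:
        return zlib.decompress(self._blocks[key])

    def __len__(self) -> int:
        return len(self._blocks)


store = SingleInstanceStore()
first = store.put(b"hello" * 100)
second = store.put(b"hello" * 100)  # same content, so no new entry is created
```

Storing the same block twice returns the same key and leaves a single entry in the store, which is the saving that single-instance storage aims for.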

6 / 18 -- Chopping Data Into Small Blocks

- Natural Solution: Fixed-Size Blocks

- Finding More Similarities Using Content-Based Chopping

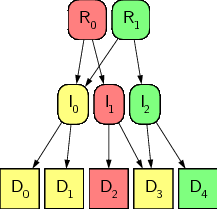
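
The difference between the two chopping strategies can be sketched as follows. This is a simplified rolling-hash chopper written for illustration, not Manber's exact algorithm; the window size, mask, and hash constants are assumptions, and the blocks are kept much smaller than 1024 B so the demonstration stays short.

```python
import hashlib
import random

WINDOW = 16                    # bytes in the sliding window (assumed value)
MASK = (1 << 6) - 1            # cut when the low 6 bits of the hash are zero
BASE = 257                     # polynomial hash base (assumed value)
MOD = (1 << 31) - 1            # polynomial hash modulus (assumed value)
POW = pow(BASE, WINDOW - 1, MOD)


def fixed_chunks(data: bytes, size: int = 64):
    """Cut at fixed offsets, regardless of content."""
    return [data[i:i + size] for i in range(0, len(data), size)]


def content_chunks(data: bytes):
    """Cut wherever the hash of the last WINDOW bytes matches a pattern."""
    chunks, start, h = [], 0, 0
    for i, byte in enumerate(data):
        if i >= WINDOW:
            h = (h - data[i - WINDOW] * POW) % MOD  # drop the oldest byte
        h = (h * BASE + byte) % MOD                 # take in the newest byte
        if i + 1 - start >= WINDOW and (h & MASK) == 0:
            chunks.append(data[start:i + 1])
            start = i + 1
    if start < len(data):
        chunks.append(data[start:])                 # trailing partial block
    return chunks


def shared(a, b):
    """Number of distinct blocks that two chunk lists have in common."""
    digest = lambda c: hashlib.sha256(c).digest()
    return len({digest(c) for c in a} & {digest(c) for c in b})


rng = random.Random(0)
original = bytes(rng.getrandbits(8) for _ in range(8192))
edited = b"X" + original       # a single byte inserted at the front
```

Comparing `shared(fixed_chunks(original), fixed_chunks(edited))` with `shared(content_chunks(original), content_chunks(edited))` shows the effect: the insertion shifts every fixed-size boundary, while content-defined boundaries realign shortly after the edit, so most blocks are found again.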

7 / 18 -- Providing a Suitable Meta-Data Format

8 / 18 -- Providing Data Confidentiality, Integrity, and Authenticity

- Enforcing Confidentiality

- Allowing For Integrity Checks

- Allowing For Authenticity Checks
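
The integrity and authenticity checks can be sketched with the Python standard library. The sketch assumes that blocks are named by their SHA-256 digest and uses an HMAC as a stand-in for a public-key signature; all function names are hypothetical. Confidentiality is left out because the standard library provides no cipher.

```python
import hashlib
import hmac


def block_id(block: bytes) -> str:
    """Name a block after the hash of its contents."""
    return hashlib.sha256(block).hexdigest()


def verify_block(expected_id: str, block: bytes) -> bool:
    """Integrity: a block returned by an untrusted peer must match its name."""
    return hmac.compare_digest(block_id(block), expected_id)


def tag_index(owner_key: bytes, block_ids) -> str:
    """Authenticity: the owner tags the list of block names with a secret key.

    HMAC is an assumption made for brevity; a real design would more likely
    use a digital signature so that verification needs no shared secret.
    """
    index = "\n".join(block_ids).encode()
    return hmac.new(owner_key, index, hashlib.sha256).hexdigest()


def verify_index(owner_key: bytes, block_ids, tag: str) -> bool:
    return hmac.compare_digest(tag_index(owner_key, block_ids), tag)


key = b"owner-secret"                      # hypothetical owner key
ids = [block_id(b"part one"), block_id(b"part two")]
tag = tag_index(key, ids)
```

With these helpers, a block that was altered in storage fails `verify_block`, and an index that was not produced with the owner's key fails `verify_index`.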

9 / 18 -- Enforcing Backup Atomicity

- Comparison With Distributed and Mobile File Systems

- Using Write-Once Semantics

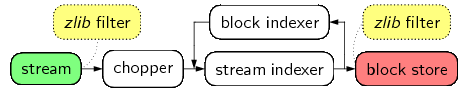
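
One way write-once semantics can yield atomic backups is sketched below. It assumes content-addressed blocks and a root index block that is written last; the `WriteOnceStore`, `backup`, and `restore` names are hypothetical and not taken from the implementation presented here.

```python
import hashlib


class WriteOnceStore:
    """Blocks are added under their content hash and never modified."""

    def __init__(self):
        self._blocks = {}

    def put(self, block: bytes) -> str:
        key = hashlib.sha256(block).hexdigest()
        self._blocks.setdefault(key, block)  # an existing entry is left as-is
        return key

    def get(self, key: str) -> bytes:
        return self._blocks[key]


def backup(store: WriteOnceStore, chunks) -> str:
    """Write all data blocks first, then the index block that names them."""
    keys = [store.put(chunk) for chunk in chunks]
    # The backup only becomes reachable once this root block exists.
    return store.put("\n".join(keys).encode())


def restore(store: WriteOnceStore, root: str) -> bytes:
    index = store.get(root).decode()
    if not index:
        return b""                           # backup of empty input
    return b"".join(store.get(key) for key in index.split("\n"))


store = WriteOnceStore()
root_v1 = backup(store, [b"header", b"body v1"])
root_v2 = backup(store, [b"header", b"body v2"])  # the unchanged block is reused
```

Because no block is ever overwritten, creating the second version cannot damage the first: `restore(store, root_v1)` still returns the original data, and an interrupted backup simply leaves unreferenced blocks behind.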

11 / 18 -- Our Storage Layer Implementation: libchop

- Key Components

- Strong Focus on Compression Techniques

13 / 18 -- Algorithmic Combinations

| Config. | Single Instance? | Chopping Algorithm | Expected Block Size | Input Zipped? | Blocks Zipped? |
|---|---|---|---|---|---|
| A1 | no | --- | --- | yes | --- |
| A2 | yes | --- | --- | yes | --- |
| B1 | yes | Manber's | 1024 B | no | no |
| B2 | yes | Manber's | 1024 B | no | yes |
| B3 | yes | fixed-size | 1024 B | no | yes |
| C | yes | fixed-size | 1024 B | yes | no |

14 / 18 -- Storage Efficiency & Computational Cost Assessment

| Config. | Summary | Resulting Data Size (C files) | Resulting Data Size (Ogg) | Resulting Data Size (mbox) | Throughput, MiB/s (C files) | Throughput, MiB/s (Ogg) | Throughput, MiB/s (mbox) |
|---|---|---|---|---|---|---|---|
| A1 | (without single instance) | 26% | 100% | 55% | 21 | 15 | 18 |
| A2 | (with single instance) | 13% | 100% | 55% | 22 | 15 | 17 |
| B1 | Manber | 25% | 102% | 88% | 12 | 6 | 15 |
| B2 | Manber + zipped blocks | 11% | 103% | 58% | 7 | 5 | 10 |
| B3 | fixed-size + zipped blocks | 18% | 103% | 71% | 11 | 5 | 18 |
| C | fixed-size + zipped input | 13% | 102% | 57% | 22 | 5 | 21 |

15 / 18 -- Storage Efficiency & Computational Cost Assessment

- Single-Instance Storage

- Content-Defined Blocks (Manber)

- Lossless Compression

16 / 18 -- Conclusions

- Implementation of a Flexible Prototype

- Assessment of Compression Techniques

- Six Essential Storage Requirements

17 / 18 -- On-Going & Future Work

- Improved Energetic Cost Assessment

- Algorithmic Evaluation

- Design & Implementation